[Reference]

Chengshuo Xia*, Ayane Saito*, Yuta Sugiura, Using the Virtual Data-driven Measurement to Support the Prototyping of Hand Gesture Recognition Interface with Distance Sensor, Sensors and Actuators A: Physical. (* These authors contributed equally.) [DOI]

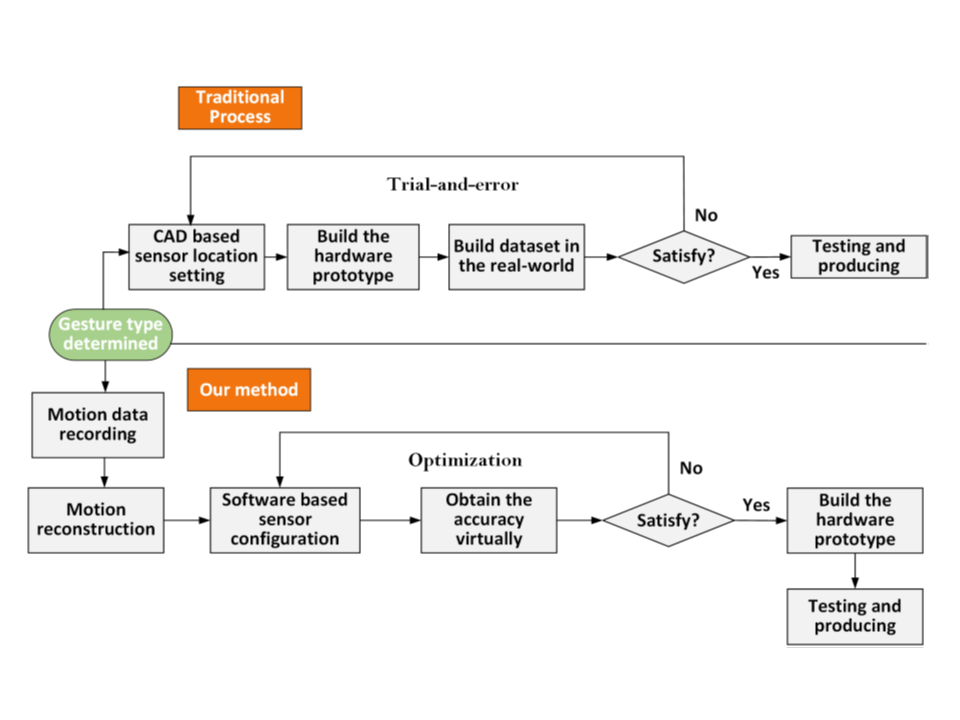

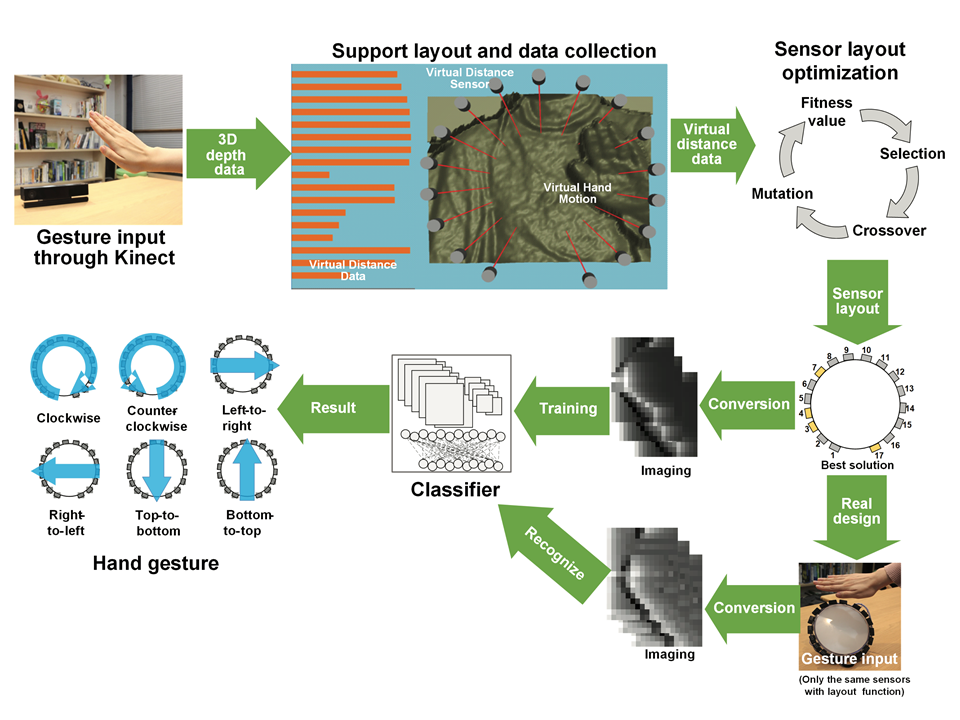

The performance of a hand gesture recognition system built on fixed sensors typically depends on where the sensors are placed and how many are used. The traditional development process runs through sensor pre-deployment, data collection, and model training, so working out how many sensors to place, and where, is time-consuming and expensive, and the process leaves little room for trial-and-error redesign. In this paper, we present a new development flow that assists in prototyping gesture recognition interfaces based on distance sensors. The designed system simulates candidate sensor positions and counts for recognizing a set of gestures. Using hand motion reconstructed in software, virtual distance sensors generate simulated signals, which are used to train a convolutional neural network model. In the real-world setting, a small number of gesture samples are collected with the same sensor layout and combined with the virtual dataset through transfer learning. The proposed method thus both recommends a sensor configuration and produces a trained classifier from virtual distance data plus a few real samples, which effectively reduces development cost. We evaluated two prototype interfaces built with the proposed method using distance sensors and demonstrated that the system provides effective deployment recommendations and that models trained on virtual measurement data recognize real gestures effectively.
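
To make the idea of a virtual distance sensor concrete, the following is a minimal sketch rather than the implementation used in the paper. It assumes the reconstructed hand is available as a point cloud per frame, models each sensor as an idealised cylindrical beam, and ranks candidate layouts with a simple signal-separability score that stands in for the convolutional network and transfer-learning step. The names `virtual_distance`, `simulate_signal`, and `layout_score`, the beam radius, and the maximum range are all hypothetical choices made for illustration.

```python
import numpy as np

def virtual_distance(points, origin, direction, beam_radius=0.02, max_range=0.5):
    """Reading of one idealised distance sensor for one frame of hand points.

    points: (N, 3) array of hand-surface points in metres (assumed input format).
    Returns the distance along the beam to the nearest point inside the beam,
    or max_range when nothing is hit.
    """
    direction = np.asarray(direction, dtype=float)
    direction = direction / np.linalg.norm(direction)
    rel = np.asarray(points, dtype=float) - np.asarray(origin, dtype=float)
    along = rel @ direction  # distance of each point along the beam axis
    perp = np.linalg.norm(rel - np.outer(along, direction), axis=1)  # offset from axis
    hit = (along > 0) & (along <= max_range) & (perp <= beam_radius)
    return float(along[hit].min()) if hit.any() else max_range

def simulate_signal(frames, sensors):
    """Stack readings into a (T, S) array for T frames and S sensors."""
    return np.array([[virtual_distance(f, o, d) for (o, d) in sensors]
                     for f in frames])

def layout_score(gesture_frames, sensors):
    """Smallest Euclidean gap between the simulated signals of any two gestures.

    A stand-in for classifier accuracy: a larger gap means the layout separates
    the gestures better. Assumes every gesture has the same number of frames.
    """
    signals = [simulate_signal(frames, sensors).ravel()
               for frames in gesture_frames.values()]
    gaps = [np.linalg.norm(a - b)
            for i, a in enumerate(signals) for b in signals[i + 1:]]
    return min(gaps)

# Toy "reconstructed" gestures: a single fingertip point per frame.
gestures = {
    "push": [np.array([[0.0, 0.0, z]]) for z in (0.3, 0.2, 0.1)],
    "swipe": [np.array([[x, 0.0, 0.2]]) for x in (-0.1, 0.0, 0.1)],
}

# Candidate layouts: each sensor is (origin, pointing direction).
layouts = {
    "center": [((0.0, 0.0, 0.0), (0.0, 0.0, 1.0))],
    "far": [((0.3, 0.0, 0.0), (0.0, 0.0, 1.0))],
}

scores = {name: layout_score(gestures, sensors) for name, sensors in layouts.items()}
best_layout = max(scores, key=scores.get)
print(scores, best_layout)
```

In this toy setup the sensor placed under the hand sees both gestures and separates them, while the sensor placed off to the side never detects the hand, so the two gestures look identical to it. A fuller version would replace `layout_score` with a classifier trained on the simulated signals and then fine-tuned on a few real recordings, as the abstract describes.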