Development of a System to Support Sensor Placement and Data Collection for Data-Driven Real-World Measurement

Software to Support Layout and Data Collection for Machine-Learning-Based Real-World Sensors

2019

齋藤彩音,河合航,杉浦裕太

Ayane Saito, Wataru Kawai, Yuta Sugiura

[Reference]

Ayane Saito, Wataru Kawai, Yuta Sugiura. (2019) Software to Support Layout and Data Collection for Machine-Learning-Based Real-World Sensors. In: Stephanidis C. (eds) HCI International 2019 – Posters. HCII 2019. Communications in Computer and Information Science, vol 1033. Springer, Cham. [DOI]

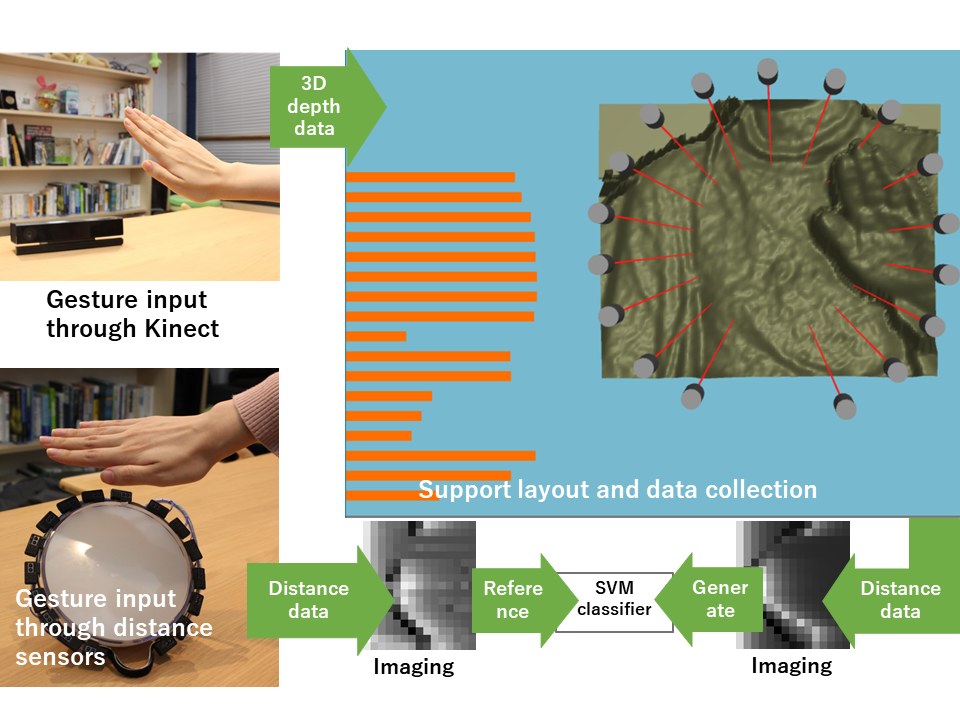

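Real-world sensor devices are important for building entertainment systems. Because their measurement results vary with the placement and number of sensors and with the motion to be measured, it is important to consider the sensor layout. However, examining sensor layouts and accumulating training data in the real world takes time and effort. In this study, we developed a simulator in which the layout of real-world sensors can be designed. In the simulator, we measured the distance between freely placed sensors and real-world deformation recorded with a Kinect, and generated a gesture classifier from those measurements. We then used that classifier to recognize gestures with sensors placed in the real world.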

There have been many studies of gesture recognition and posture estimation that combine real-world sensors with machine learning. In such systems, it is important to consider the sensor layout because the measurement result varies with the layout and number of sensors as well as with the motion to be measured. However, it takes time and effort to prototype a device repeatedly in order to find a sensor layout with high identification accuracy. In addition, training data are needed to recognize gestures, and collecting them again takes time whenever the user changes the sensor layout. In this study, we developed software in which real-world sensors can be arranged. At present, the software handles distance-measuring sensors as its real-world sensors. The user places these sensors freely in the software. The software measures the distance between each sensor and a mesh created from measurements of real-world deformation recorded by a Kinect. A classifier is then generated from the time series of distance data recorded by the software. In addition, we created a physical device with the same sensor layout as the one designed in the software. We experimentally confirmed that the classifier generated in the software could recognize gestures performed on the physical device.
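The pipeline described in the abstract (virtual distance sensors reading a recorded mesh, then a classifier trained on the resulting time series) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and not the authors' implementation: the mesh is reduced to its vertices, each sensor is modelled as a narrow beam that reports the nearest vertex inside it, and a nearest-centroid rule stands in for whatever classifier the software actually generates. The names `simulate_distance`, `record_features`, `fit_centroids`, `predict`, and `plate` are hypothetical.

```python
import math

def simulate_distance(origin, direction, vertices, beam_radius=0.02, max_range=1.0):
    """Approximate one distance-sensor reading against mesh vertices.

    Returns the distance along the beam to the closest vertex within
    beam_radius of the beam axis, or max_range if no vertex is hit.
    """
    norm = math.sqrt(sum(c * c for c in direction))
    unit = [c / norm for c in direction]
    best = max_range
    for v in vertices:
        rel = [v[i] - origin[i] for i in range(3)]
        along = sum(rel[i] * unit[i] for i in range(3))  # distance along the beam
        if not 0.0 < along <= max_range:
            continue
        # Perpendicular offset of the vertex from the beam axis.
        perp = math.sqrt(sum((rel[i] - along * unit[i]) ** 2 for i in range(3)))
        if perp <= beam_radius:
            best = min(best, along)
    return best

def record_features(frames, sensors):
    """Flatten the readings of every sensor over every frame into one vector."""
    return [simulate_distance(o, d, verts) for verts in frames for (o, d) in sensors]

def fit_centroids(samples, labels):
    """Average the feature vectors of each gesture label (stand-in classifier)."""
    sums, counts = {}, {}
    for x, y in zip(samples, labels):
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, value in enumerate(x):
            acc[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [s / counts[y] for s in acc] for y, acc in sums.items()}

def predict(centroids, x):
    """Return the label whose centroid is closest to the feature vector x."""
    return min(centroids, key=lambda y: math.dist(centroids[y], x))

def plate(z, half=0.05, step=0.01):
    """Toy stand-in for one recorded mesh frame: a flat vertex grid at height z."""
    n = int(round(half / step))
    return [(i * step, j * step, z) for i in range(-n, n + 1) for j in range(-n, n + 1)]

# Two virtual sensors at the base, both pointing up (+z) at the surface.
sensors = [((0.0, 0.0, 0.0), (0.0, 0.0, 1.0)), ((0.03, 0.0, 0.0), (0.0, 0.0, 1.0))]

# Synthetic "recordings": the surface is pressed toward the sensors, or stays put.
press = [plate(z) for z in (0.30, 0.20, 0.10)]
rest = [plate(0.30)] * 3

centroids = fit_centroids(
    [record_features(press, sensors), record_features(rest, sensors)],
    ["press", "rest"],
)
```

In this sketch, changing the `sensors` list and re-running `record_features` on the same recorded frames regenerates the training data for a new layout without rebuilding any hardware, which is the saving the abstract describes. A real setup would likely intersect each beam with the mesh triangles rather than its vertices and would account for sensor noise.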