A framework adaptable within or across users when using wearable devices

Intra-/inter-user adaptation framework for wearable gesture sensing device

2018

菊井浩祐,伊藤勇太,山田誠,杉浦裕太,杉本麻樹

Kosuke Kikui, Yuta Itoh, Makoto Yamada, Yuta Sugiura, Maki Sugimoto

[Reference / How to cite]

Kosuke Kikui, Yuta Itoh, Makoto Yamada, Yuta Sugiura, and Maki Sugimoto, Intra-/inter-user adaptation framework for wearable gesture sensing device, In Proceedings of the 2018 ACM International Symposium on Wearable Computers (ISWC ’18), ACM, pp. 21-24, September 8-12, 2018, Singapore. [DOI]

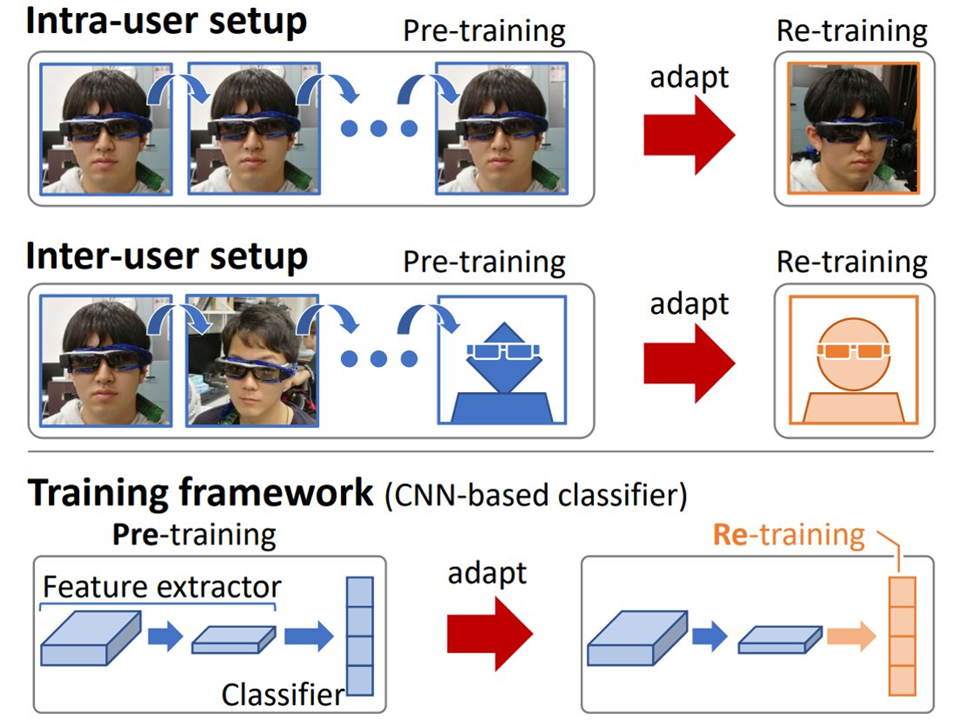

The photo reflective sensor (PRS), a tiny distance-measurement module, is a popular electronic component widely used in wearable user interfaces. An unavoidable issue of such wearable PRS devices in practical use is the need for user-dependent training to achieve high gesture recognition accuracy. Each new user has to re-train the device by providing new training data (we call this the inter-user setup). Even worse, re-training is ideally also necessary every time the same user re-wears the device (we call this the intra-user setup). In this paper, we propose a domain adaptation framework that reduces this training cost for users. Specifically, we adapt a pre-trained convolutional neural network (CNN) in both the inter-user and intra-user setups to maintain high recognition accuracy. Using an actual PRS device, we demonstrate that our framework significantly improves the average classification accuracy of the intra-user and inter-user setups to 87.43% and 80.06%, respectively, compared with 68.96% and 63.26% for the baseline (non-adapted) setups.
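The abstract does not say how the pre-trained CNN is adapted. The sketch below is a rough illustration only. It shows one common form of supervised domain adaptation: pre-train a classifier on one wearing session, then fine-tune a copy on a few labelled samples from a new session.

Everything in it is an assumption, not the authors' method:
- The CNN is replaced by a single-layer softmax classifier.
- The PRS readings are synthetic.
- Re-wearing is simulated by cyclically shifting the sensor channels, as if the band were rotated by one sensor position.
- The names `make_samples`, `sgd_train`, `copy_model` and the constants are hypothetical.

```python
import math
import random

# Hypothetical setup: a band with 4 PRS channels recognising 3 gestures.
NUM_SENSORS = 4
NUM_GESTURES = 3

# Hypothetical "ideal" reading per gesture: one channel reads high, the rest low.
PROTOTYPES = [[1.0 if s == g else 0.0 for s in range(NUM_SENSORS)]
              for g in range(NUM_GESTURES)]


def make_samples(n_per_class, rotation, rng, noise=0.1):
    """Synthetic labelled readings; `rotation` cyclically shifts the channels
    to mimic the band being re-worn at a rotated position."""
    samples = []
    for label, proto in enumerate(PROTOTYPES):
        for _ in range(n_per_class):
            x = [v + rng.gauss(0.0, noise) for v in proto]
            if rotation:
                x = x[-rotation:] + x[:-rotation]
            samples.append((x, label))
    return samples


def logits(model, x):
    W, b = model
    return [sum(w * v for w, v in zip(W[k], x)) + b[k] for k in range(NUM_GESTURES)]


def softmax(z):
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


def sgd_train(model, samples, epochs, lr, rng):
    """Plain SGD on softmax cross-entropy; updates `model` in place and returns it."""
    W, b = model
    for _ in range(epochs):
        order = samples[:]
        rng.shuffle(order)
        for x, y in order:
            p = softmax(logits(model, x))
            for k in range(NUM_GESTURES):
                g = p[k] - (1.0 if k == y else 0.0)  # dLoss/dlogit_k
                b[k] -= lr * g
                for j in range(NUM_SENSORS):
                    W[k][j] -= lr * g * x[j]
    return model


def accuracy(model, samples):
    hits = 0
    for x, y in samples:
        z = logits(model, x)
        predicted = max(range(NUM_GESTURES), key=lambda k: z[k])
        hits += int(predicted == y)
    return hits / len(samples)


def copy_model(model):
    W, b = model
    return [row[:] for row in W], b[:]


rng = random.Random(0)
source_train = make_samples(50, rotation=0, rng=rng)  # original wearing session
target_few = make_samples(5, rotation=1, rng=rng)     # few labelled samples after re-wearing
target_test = make_samples(50, rotation=1, rng=rng)   # held-out data from the new session

# "Pre-trained" model: trained only on the original session.
pretrained = ([[0.0] * NUM_SENSORS for _ in range(NUM_GESTURES)], [0.0] * NUM_GESTURES)
sgd_train(pretrained, source_train, epochs=30, lr=0.1, rng=rng)

# Adaptation: fine-tune a copy of the pre-trained weights on the few new samples.
adapted = sgd_train(copy_model(pretrained), target_few, epochs=200, lr=0.1, rng=rng)

baseline_acc = accuracy(pretrained, target_test)
adapted_acc = accuracy(adapted, target_test)
print(f"non-adapted: {baseline_acc:.2f}, adapted: {adapted_acc:.2f}")
```

Under this simulated channel shift, the non-adapted model systematically confuses gestures. The fine-tuned copy recovers them from only five samples per gesture. The exact numbers depend on the random seed and the toy data, and they say nothing about the accuracies reported in the paper.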